Measuring and Optimizing Computer Networks

by Kelley Weiss

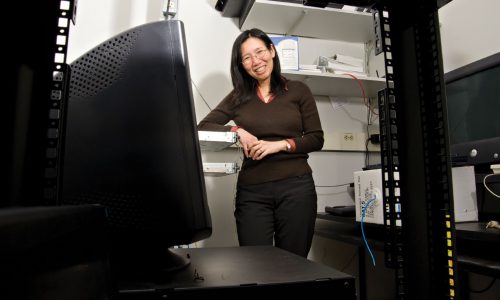

Monitor. Analyze. Learn. Improve. Then repeat. Chen-Nee Chuah says she’s applied this approach to most things in her life, even in childhood.

She says her need to bring order to her environment would drive her parents crazy, but she came by it naturally. Chuah grew up in Malaysia, where her mom and dad owned a grocery store. They had to manage supply and demand every day, and they rang up customers and made change without a calculator. Her parents were not formally educated, but half of their six children went on to become engineering professors.

It’s fitting that now, as a professor in the UC Davis Department of Electrical and Computer Engineering, Chuah has taken on the task of improving the sprawling and almost impenetrable network powering our computers and smartphones.

She leads the Robust and Ubiquitous Networking (RUBINET) Research Group and is an ACM Distinguished Scientist and a Fellow of the Institute of Electrical and Electronics Engineers (IEEE).

She compares her work monitoring the network to managing vehicle traffic. As people stream videos on their phones and send emails, each of those actions generates small units of data called packets, and millions of these packets have to reach their destinations.

“Network routers are like the intersection and they decide where to send the packets next, and different routers together send it on a path. If you send too much traffic, then the routers get overloaded and congested and start to drop packets.”

So Chuah is analyzing the network to improve its function.

“Given the traffic demand we want to know how to route this traffic through your network so that we minimize the delay and loss for the user,” she says. “And at the same time, from the provider’s perspective, you don’t want to overload the link because if the link fails you have to reroute the packet.”

When a packet is rerouted, it can be delayed or dropped, which for the customer can mean a dropped call, a lost video frame, or a failed download.

When Chuah was completing her Ph.D. at UC Berkeley, her research focused on Quality of Service (QoS), particularly the health of the core network. She built on her doctoral research when she first worked at Sprint and after she became a professor at UC Davis in 2002.

“We had to design software that pretended to be a router so that we could listen in to the router messages and then piece together what was happening,” she says. “This was the first project I did with Sprint and was part of my CAREER proposal with the National Science Foundation that was looking at routing failures. We came up with a failure restoration mechanism that can minimize the disruption during link/node failures.”

From there, Chuah went on to develop a way to measure network traffic and performance. She says the network has become so complex, with so much data flowing around the clock, that tracking individual packets is nearly impossible.

“When the internet was designed the idea was ‘let’s keep the network simple,’” Chuah says. “But the intelligence has been pushed to the edge. Now, the trend starts to reverse, and with software defined networks (SDN) and network function virtualization (NFV), new network functions and services are being embedded in the network itself.”

To measure network traffic, Chuah first used a passive observation method that relied on sampling traffic streams without interfering with traffic forwarding.

“It’s a production network so you cannot stop forwarding normal customer traffic while collecting measurements. You have to design measurement methods that are non-intrusive,” she says.

However, this method can be very limiting, depending on the network monitoring application. First, the sampling rate itself needs to adapt because network traffic is constantly fluctuating. Second, sampling is often biased toward heavy flows that generate a lot of packets and tends to miss small flows (e.g., single scanning packets), even though information on small flows is often important for security analysis and detection.
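The bias toward heavy flows can be made concrete with a little arithmetic. The sketch below is only an illustration, not the sampling scheme Chuah's group used: it assumes every packet is kept independently with the same probability, and the function name `detection_probability` is made up for this example. Under that assumption, a flow of n packets is seen at least once with probability 1 - (1 - p)^n.

```python
def detection_probability(packets_in_flow: int, sampling_rate: float) -> float:
    """Chance that at least one packet of a flow is sampled.

    Assumes (for illustration) that each packet is kept independently
    with probability `sampling_rate`.
    """
    if packets_in_flow < 0:
        raise ValueError("packets_in_flow must be non-negative")
    if not 0.0 <= sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be between 0 and 1")
    # A flow is missed only if every one of its packets is skipped.
    return 1.0 - (1.0 - sampling_rate) ** packets_in_flow


if __name__ == "__main__":
    rate = 0.01  # hypothetical 1-in-100 packet sampling
    for size in (1, 10, 100, 10_000):
        chance = detection_probability(size, rate)
        print(f"flow of {size:>6} packets: seen with probability {chance:.4f}")
```

At a 1-in-100 rate, a single scanning packet is caught only about one time in a hundred, while a flow of ten thousand packets is almost certain to show up in the sample.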

From 2008 to 2012, Chuah worked with colleagues at UC San Diego, the Georgia Institute of Technology and the University of Minnesota to develop a more advanced method called Programmable MEasurement (ProgME). This put programmable measurement modules on each node and provided much more adaptability.

“So you can adapt a rate and ask, ‘how fast do you want to sample?’” she says. “Or, which traffic population do I want to sample? For security detection, maybe I don’t care about most of the traffic but if it’s coming from a specific IP address or port range then I want to pay more attention.”
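The idea in that quote, choosing both how fast to sample and which traffic to watch, can be sketched in a few lines. This is not ProgME's actual interface, which the article does not describe. The `Packet` and `Rule` classes, the function names, and the addresses (taken from documentation-only ranges) are all hypothetical, chosen just to show a per-rule sampling rate keyed on source address and port range.

```python
import ipaddress
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src_ip: str    # source address of the packet
    dst_port: int  # destination port of the packet


@dataclass(frozen=True)
class Rule:
    src_net: ipaddress.IPv4Network  # traffic population: source prefix
    ports: range                    # traffic population: destination ports
    rate: float                     # sampling probability for matching packets

    def matches(self, pkt: Packet) -> bool:
        in_net = ipaddress.ip_address(pkt.src_ip) in self.src_net
        return in_net and pkt.dst_port in self.ports


def sampling_rate(pkt: Packet, rules: list, default_rate: float) -> float:
    """Return the rate of the first matching rule, else the default."""
    for rule in rules:
        if rule.matches(pkt):
            return rule.rate
    return default_rate


def should_sample(pkt: Packet, rules: list, default_rate: float,
                  rng: random.Random) -> bool:
    """Keep the packet with the probability its rule assigns."""
    return rng.random() < sampling_rate(pkt, rules, default_rate)


if __name__ == "__main__":
    # Hypothetical policy: watch one suspicious prefix closely on
    # low-numbered ports, sample everything else lightly.
    rules = [Rule(ipaddress.ip_network("203.0.113.0/24"), range(0, 1024), 1.0)]
    rng = random.Random(0)
    for pkt in (Packet("203.0.113.7", 22), Packet("198.51.100.1", 443)):
        print(pkt, "rate =", sampling_rate(pkt, rules, 0.01),
              "sampled =", should_sample(pkt, rules, 0.01, rng))
```

Because the rules are plain data, changing what the monitor pays attention to means swapping the rule list rather than rewriting the sampler.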

In addition to the challenges of measuring such a high volume of information on the network, Chuah says that in the past it was difficult to implement novel learning or measurement techniques on the router itself because of proprietary implementation details. With the advent of software-defined networking and the introduction of the OpenFlow protocol, more router vendors support talking to the router and programming it remotely through OpenFlow APIs.

“It’s a perfect match for our work because previously we had to build our programmable measurement modules on reprogrammable hardware,” she says. “But now I can map what I wanted to do originally for programmable measurements onto SDN (Software Defined Networking) routers.”

Chuah says this measurement advancement brings up a more interesting question.

“Now that I have this capability, the next question is how do I program the router on-the-fly to react to changing network and traffic conditions?” she says. “It’s an online learning problem where we need to monitor the traffic, analyze and learn from it, and derive the optimal measurement rules for the next time window.”
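The monitor, analyze, adjust cycle she describes can be illustrated with a deliberately simple feedback rule. This is not the learning method from her research. It is a toy heuristic that assumes a fixed budget of samples per time window, with hypothetical names throughout: after each window, the sampling rate for the next window is set so that, if traffic stays the same, the budget would be just met.

```python
def next_sampling_rate(packets_seen: int, sample_budget: int,
                       min_rate: float = 0.001, max_rate: float = 1.0) -> float:
    """Pick the next window's rate from the traffic seen in this window."""
    if packets_seen <= 0:
        # Nothing observed: sample as much as allowed to avoid missing traffic.
        return max_rate
    wanted = sample_budget / packets_seen
    # Clamp so the rate never leaves the allowed range.
    return max(min_rate, min(max_rate, wanted))


def plan_rates(window_packet_counts, sample_budget: int,
               initial_rate: float = 0.1) -> list:
    """Return the sampling rate that would be in force during each window."""
    rate = initial_rate
    plan = []
    for count in window_packet_counts:
        plan.append(rate)                                # rate used now
        rate = next_sampling_rate(count, sample_budget)  # rule for next window
    return plan


if __name__ == "__main__":
    # Hypothetical packet counts for four consecutive time windows.
    traffic = [1_000, 100_000, 500, 20_000]
    for count, rate in zip(traffic, plan_rates(traffic, sample_budget=1_000)):
        print(f"{count:>7} packets in window, sampled at rate {rate}")
```

Each window's rate reacts to the previous window's load, so a traffic surge is followed by lighter sampling and a lull by heavier sampling. A real system would replace the one-line heuristic with a learned policy.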

This is the focus of a recently completed NSF project called Learn, Adapt and Profile (LeAP), led by Chuah, along with her colleague Qing Zhao, a professor in the UC Davis Department of Electrical and Computer Engineering, as well as a collaborator at Hewlett-Packard Laboratories.

This is another step in her journey to find new and better ways to analyze and improve our computer networks.